IT Assorti

blog by Vasyl Mylko

Tag Archives: future

10,000x faster

We wanted to know it

Since mankind developed some good intelligence, we [people] immediately started to discover our world. We walked on foot as far as we could reach. We domesticated big animals – horses – and rode them to reach even further, horizontally and vertically. So we reached the water. Horses could not bring us across the seas and oceans. We had to create a new technology that could carry people over the water – ships.

Fantasy map of a flat earth — Image by © Antar Dayal/Illustration Works/Corbis

Ship building itself required a good deal of calculation. And a ship alone is not sufficient to get there; some navigation is needed. We developed measurement and calculation of wood and nails, measurement of time, and navigation by the stars and the cardinal directions. That was a kind of computing. Not the earliest computing ever, but computing good enough to let us spread the knowledge and vision of our [flat] world.

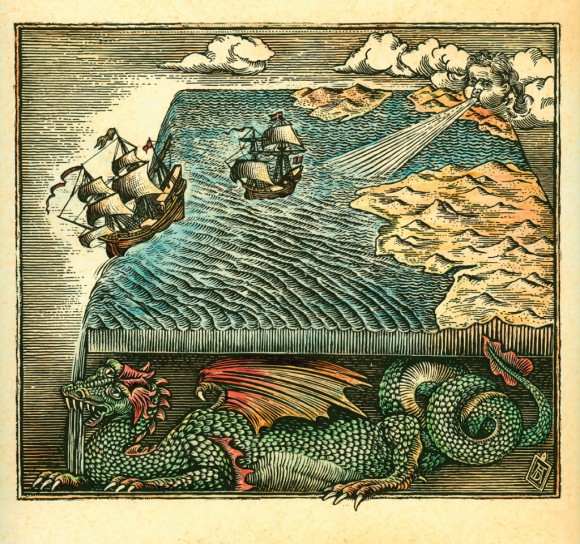

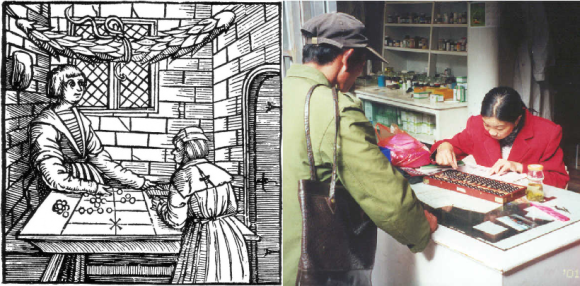

Wooden computing

An early device for computing was the abacus. Though it is usually called a calculating tool or counting frame, we use the word computing, because this topic is about computing technology. The abacus as a computing technology was designed at a size bigger than a man and smaller than a room. Then wooden computing technology miniaturized to desktop size. This is important: it emerged at a size between 1 and 10 meters, and got smaller over time to fit onto a desktop. We could call it manual wooden computing too. Wooden computing technology is still in use nowadays in African countries, China and Russia.

Mechanical computing

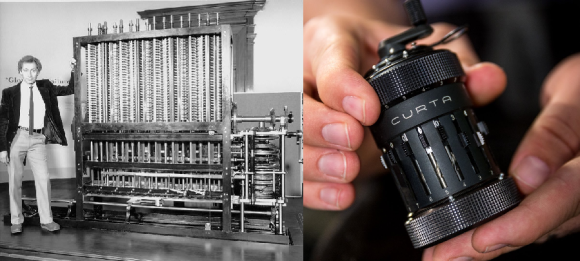

Metal computing emerged after wooden. Charles Babbage designed his analytical engine from metal gears, to be more precise – from the Leibniz wheel. That animal was bigger than a man and smaller than a room. Below is a juxtaposition of the inventor himself with his creation (on the left). Metal computing technology miniaturized over time, and fit into a hand.

Curt Herzstark made a really small mechanical calculator and named it Curta (on the right). Curta also lived long, well into the middle of the 20th century. Nowadays Curta is a favorite collectible, priced at $1,000 minimum on eBay, while the majority of price tags are around $10,000 for a good working device, built in Liechtenstein.

Electro-mechanical computing

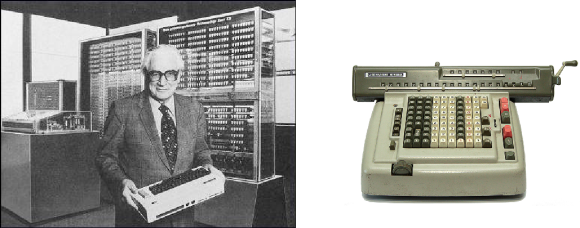

The Babbage machine became a gym device when Konrad Zuse designed the first fully automatic electro-mechanical machine, the Z3. Its clock speed was 5-10 Hz. The Z3 was used to model the flutter effect for military aircraft in Nazi Germany, and the first Z3 was destroyed during a bombardment. The Z3 was bigger than a man and smaller than a room (left photo). Then electro-mechanical computing miniaturized to desktop size, e.g. the Lagomarsino semi-automatic calculating machine (right photo).

Here something new happened – growth beyond the size of a room. Harvard Mark I was a big electro-mechanical machine, housed in a big hall. Mark I served the Manhattan Project. There was a problem: how to detonate an atomic bomb. The well-known von Neumann computed the explosive lens on it. Mark I was funded by IBM under Watson Sr.

So, electro-mechanical computing started from a size bigger than a man and smaller than a room, and then evolved in two directions: it miniaturized to desktop size, and grew to the size of a small stadium.

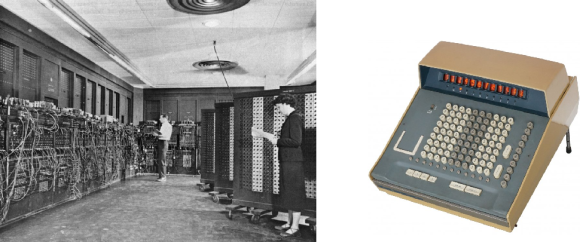

Electrical Vacuum Tube computing

At some point, mechanical parts were redesigned as electrical ones, and the first fully electrical machine was created – ENIAC. It used vacuum tubes. Its size was bigger than a man and smaller than a big room (left photo). The fully electrical computing technology on vacuum tubes got miniaturized to desktop size (right photo).

The miniaturization was very interesting and beautiful. Even vacuum tubes could be small and nice. Furthermore, there were many women in the industry at the time of electrical vacuum tube computing. Below are the famous “ENIAC girls”, with the evidence of miniaturization of modules, from left to right, smaller is better. Side question: why did women leave programming?

ENIAC was very difficult to program. Here is a tutorial on how to code the modulo function. There were six programmers who could do it really well. ENIAC was intended for ballistic computing. But the same well-known von Neumann from the atomic bomb project got access to it and ordered the first ten programs for the hydrogen bomb.

Fully automatic electrical machines grew big, very big, bigger than Mark I, II, III etc. They were used for military purposes and space programs. IBM SAGE is in the photo; its size is like a mid-sized stadium.

Electrical Transistor computing

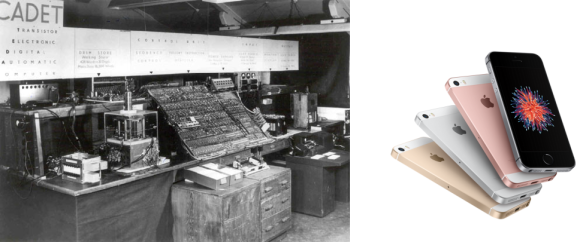

The first fully transistorized machine was probably built by IBM, though there is a photo of a European [second] machine, called CADET (left photo). There were no vacuum tubes in it anymore. Transistor technology is still alive, and very well miniaturized to desktop and hand size (right photo).

Miniaturization of transistor computing went even further than the size of a hand. Think of small contact lenses, small robots in veins, brain implants, spy devices and so on. And transistors are getting smaller and smaller; today 14nm is not a big deal. There are a dozen silicon foundries capable of doing FinFET at such scale.

Transistor computers grew really big, to the size of a stadium. The Earth is being covered by data centers, each the size of multiple stadiums. The Titan computer is in the photo, capable of crunching data at the rate of more than 10 petaFLOPS. The most powerful supercomputer today is the Chinese Sunway TaihuLight at 93 petaFLOPS.

But let me remind you of the point: electrical transistor computing was designed at a size bigger than a man and smaller than a room, and then evolved into tiny robots and huge supercomputers.

Quantum computing

Designed at the size bigger than a man, smaller than a room.

Everything is a fridge. The magic happens at the edge of that vertical structure, framed by the doorway, 1 meter above the floor. There is a chip, designed by D-Wave and built by Cypress Semiconductor, cooled to a temperature close to absolute zero (-273C). Superconductivity emerges. Quantum physics starts its magic. All you need is to shape your problem into one that the quantum machine can run.

It’s a somewhat complicated exercise, like the modulo function for the first fully automatic electrical machines on vacuum tubes years ago. But it is possible. You have to take your time, paper and pen/pencil, and bring your problem to the equivalent Ising model. Then it is easy: give the input to the quantum machine, switch on, switch off, take the output. Do not watch while the machine is on, because you will kill the wave features of the particles.
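
As a minimal sketch of what “bringing your problem to the equivalent Ising model” can look like, take number partitioning: split a list of numbers into two groups with equal sums. Give each number a spin s_i = ±1; squaring the signed sum yields an Ising energy with couplings J_ij = 2·a_i·a_j plus a constant. The function names and the sample numbers are hypothetical, and a classical brute-force search stands in for the quantum machine here, so this only illustrates the paper-and-pencil mapping, not how a real annealer is programmed.

```python
from itertools import combinations, product


def partition_to_ising(numbers):
    """Map number partitioning to an Ising model.

    Returns (J, offset) such that for spins s_i in {-1, +1}:
        offset + sum(J[i, j] * s_i * s_j) == (sum(a_i * s_i)) ** 2
    """
    J = {(i, j): 2 * numbers[i] * numbers[j]
         for i, j in combinations(range(len(numbers)), 2)}
    offset = sum(a * a for a in numbers)  # s_i * s_i == 1 for every spin
    return J, offset


def ising_energy(J, offset, spins):
    """Energy of one spin configuration."""
    return offset + sum(c * spins[i] * spins[j] for (i, j), c in J.items())


def brute_force_ground_state(J, offset, n):
    """Exhaustive classical search over all 2**n spin configurations."""
    return min(product((-1, 1), repeat=n),
               key=lambda s: ising_energy(J, offset, s))


numbers = [4, 5, 6, 7, 8]  # hypothetical input
J, offset = partition_to_ising(numbers)
best = brute_force_ground_state(J, offset, len(numbers))

group_up = [a for a, s in zip(numbers, best) if s == 1]
group_down = [a for a, s in zip(numbers, best) if s == -1]
print(group_up, group_down, ising_energy(J, offset, best))
```

An energy of zero means the two groups have equal sums. The exhaustive search doubles in cost with every added number, which is exactly the part one would hope to hand over to the quantum machine.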

Today, D-Wave solves problems 10,000x faster than transistor machines. There is potential to make it 50,000x faster. Cool times ahead!

Motivation

Why do we need such huge computing capabilities? Who cares? I personally care. Maybe others similar to me, me similar to them. I want to know who we are, what is the world, and what it’s all about.

Nature does not compute the way we do with transistor machines. As my R&D colleague said about a piece of metal: “You raise the temperature, and a solid brick of metal instantly goes liquid. Nature computes it at the atomic level, and does it very, very fast.” Recently one of the Chinese supercomputers, Tianhe-1A, computed the behavior of 110 billion atoms over 500,000 evolution steps… Is that much? It corresponded to only 0.1 nanosecond of real time, done in three hours of computing.

Let’s do another comparison for the same number of atoms, about 10^11. If each evolution step were computed in 1 millisecond, the 500,000 steps would take only 500 seconds, less than 10 minutes. My body has about 10^28 atoms. Hence, to simulate the entire me for 10 minutes at the level of individual atoms, even at that rate, we would need about 10^17x more Tianhe-1A supercomputers… Obviously our current computing is the wrong way of computing. We need to invent further. But to invent further, we have to adopt a new way of computing – quantum computing.
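
The arithmetic can be checked with a few lines of Python. This is a rough sketch that uses only the figures quoted in the text (110 billion atoms, 500,000 steps, 0.1 nanosecond simulated in three hours, about 10^28 atoms in a body); the variable names are my own, and the atom-count ratio alone comes out at roughly 10^17.

```python
import math

# Figures quoted in the text for the Tianhe-1A run.
atoms_simulated = 110e9          # 110 billion atoms
steps = 500_000                  # evolution steps
simulated_time_s = 0.1e-9        # 0.1 nanosecond of real time
wall_time_s = 3 * 3600           # three hours of computing

# Rough number of atoms in a human body, as stated in the text.
body_atoms = 1e28

# Physical time covered by one evolution step.
timestep_s = simulated_time_s / steps

# How many seconds of computing one second of nature costs.
slowdown = wall_time_s / simulated_time_s

# How many times more atoms a body has than the simulated sample.
atom_ratio = body_atoms / atoms_simulated

print(f"time step: {timestep_s:.1e} s")
print(f"slowdown vs nature: {slowdown:.2e}x")
print(f"atom ratio: {atom_ratio:.2e} (about 10^{round(math.log10(atom_ratio))})")
```

The slowdown figure, about 10^14, is a second gap on top of the atom count: the real run was that much slower than nature for the small sample alone.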

Who needs such simulations? Here is a counter question – what is Intelligence? Intelligence is our capability to predict the future (Michio Kaku). We could compute the future at the atomic level and know it for sure. The stronger the intelligence, the more detailed and precise our vision into the future. As we know the past and know the future, the understanding of time changes. With really powerful computing, we know for sure what will be in the future as accurately as we know what happened in the past. The distant future is more complicated to compute than the distant past. But it is possible, and this is what Intelligence does. It uses computing to know the time. And move in time. In both directions.

Conclusion

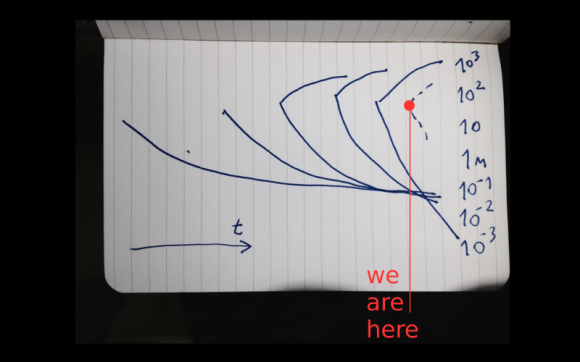

All computing technologies together, on one graph, show a pattern. Horizontally we have time, from past (left) to future (right). Vertically we have the scale of sizes, logarithmic, in meters. The red dot shows quantum computing. It is designed already, bigger than a man, smaller than a room. Its upper limits are projected to be bigger than modern transistor supercomputers. The lower limit is unknown. It’s OK that both transistor and quantum computing technologies coexist and complement each other for a while.

All right, take a look at those charts, imagine the continuation of the quantum lines, what do you see? It is “Software is eating the World”. The dragon’s tail is on the left, the body is in the middle, and the huge mouth is on the right. And this Software Dragon is eating us at all scales. Somebody calls it Digitization.

Software is eating the World, guys. And it’s OK. Right now we could do 10,000x faster computing on quantum machines. Soon we’ll be able to do 50,000x faster. Intelligence is evolving – our ability to see the future and the past. Our pathway to a time machine.

We will fuck robots

We already love machines

If you have a car, and you don’t use any name to talk to your car, then there are two possible reasons: either your car is just another soulless car, or you are special. Usually every car owner loves her/his car and uses some name for it. If your car is under-powered, you would ask her: “come on, come on car_name”, when climbing an ascending road. So you are already talking to machines, at least to one machine – your car.

The same is true for a boat, a small airboat or a bigger luxurious one. Obviously it is true for bikes. Maybe now it is emerging for flying drones. It would be interesting to know what employees who still work at warehouses call the machines there… Probably they don’t love them. But the fact is that we love our lifestyle machines: cars, bikes, boats, drones.

Exponential technologies

There is technological acceleration nowadays. It is most visible in 8 technologies (check Abundance and Singularity University for deeper details):

* biotech and bioinformatics

* computational systems

* networks and sensors

* artificial intelligence

* robotics

* digital manufacturing

* medicine

* nanotech and nanomaterials

It is very interesting to dig into each of them, or combine them. But that is not the purpose of this post. There are two others, or just the Big Two, as an umbrella over those eight: military and porn. Much research is applied in the military first, then goes to other industries and lifestyle. Many technological problems are solved in porn, and many opportunities are created there.

Connecting the dots

Materials are needed to reach a realistic experience, to transcend from dolls to human peers. A new Turing test – you smell and touch the skin and hair, and you cannot distinguish between natural/organic and artificial. Maybe we could program existing cells to grow and behave slightly differently, or we will invent new synthetic materials that will be indistinguishable from organic ones. Or hybrids, why not? Probably new materials will self-assemble or be manufactured at the atomic level by new 3D printers, to connect the right atoms into the right atomic lattices. A lot depends on the connection order: diamonds and ashes are made from the same carbon atoms. The bottom line is that biotech, nanomaterials and 3D printing are well on the way to empowering the creation of cyberskin with a realistic experience.

Computational systems, robotics and artificial intelligence are what’s behind the scenes [read behind the skin]. Having joints is not enough. All those artificial bones and ligaments must be orchestrated perfectly. It is all about real-time computing. Energy-efficient real-time computing, to avoid any wires sticking out, or plenty of heat or noise being released. Everything should be smart enough to be packed into the known volume, to have known weight, center of mass and temperature, and to bend and move realistically. Emotional intelligence is important, to have an adequate mental reaction between human and machine.

Rudimentary evidence

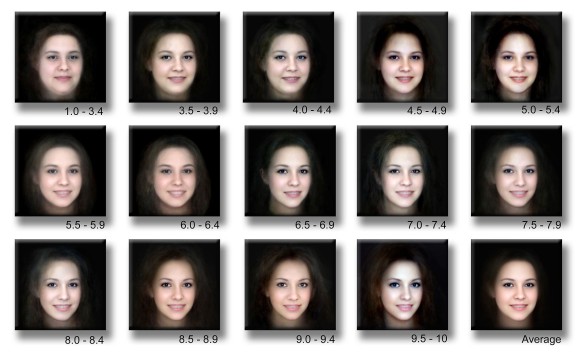

Sex dolls, dildos and travel pussies have existed for years. Porn stars have official copies of their realistic genitals and dolls sold. Porn stars might be OK that somebody fucks their rubber shadows. The same cannot be said of other celebrities, because of privacy, ethics and morals. There is some real knowledge of beauty. There is female face research, published in Brain Research, that measures beauty as a hypothalamus reaction. Something similar should be available for male faces. And not only for faces, but for the body, the voice, the smell, manners etc. All that could be grasped by measurements and machine learning. In the end we need some classification like the one for those beautiful faces, to know what exists, and then figure out how to use it.

Breakthrough

There will be just better sex dolls, indistinguishable from people. The Turing sex test will be passed between the legs. Caleb could try it with Ava, and he wanted to, he truly fell in love with Ava [Ex Machina]. That’s just a question of time. What is interesting is how we are going to control/prevent the emergence of copies of ourselves. OK, maybe there is no big demand for copies of you, but there will be big demand for copies of celebrities. And celebrities may not be happy that somebody fucks their realistic clones. That will go underground, and will grow outside the law. Probably there will be countries or territories worldwide capitalizing on these sex havens, like some did as tax havens. We will have sexual intercourse with robots like Deckard had with Rachael [Blade Runner, Los Angeles 2019], full of emotions and feelings on both sides. And enough people will do sex tourism for the forbidden fruit – to fuck their favorite peers and celebrities. Maybe there will be on-demand manufacturing of the sex clone of anybody via pictures & videos from their social traces… This is how the ultimate experience in porn will evolve. People will fuck their lovely robots.

Transformation of Consumption

Some time ago I posted on Six Graphs of Big Data and mentioned the Consumption Graph there. Then I presented Five Sources of Big Data at the data-aware conference, mentioned how retailers track people (time, movement, sex, age, goods etc.) and felt keen interest from the audience in the Consumption Data Source. Since that time I’ve thought a lot about consumption ‘as is’. Recently I’ve paid attention to glimpses of the impact on the old model from micro-entrepreneurs, who 3D-print at home and sell on Etsy. Today I want to reveal more about all that as consumption and its transformation. It will be much less about Big Data and much more about the mid-term future of Economics.

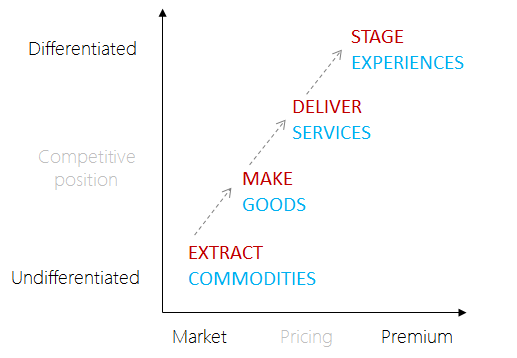

The Experience Economy

It was mentioned 15 years ago. The experience economy was identified as the next economy following the agrarian economy, the industrial economy, and the most recent service economy. Here is a link to the 1998 Harvard Business Review article, “Welcome to the Experience Economy”. The authors did an excellent job predicting the progression of economic value. The experience is a real thing, like hard goods. Recall your feelings when you are back at your favorite restaurant, where you order without looking at the menu. You go there to repeat the experience. Hence modern consumption is staged as an experience, built from services and goods. A personal experience is even better. Services and goods without staging are getting weaker… Below is a diagram of the progression of economic value.

It would be useful to compare the transformation by multiple parameters such as model, function, offering, supply, seller, buyer and demand. The credit goes to HBR. I have improved the readability of the table in comparison to theirs. There is a clear trend towards experience and personalization. Pay attention to the rightmost column, because it will be addressed in more detail later in this post. To make it more familiar and friendly for you, I’ll appeal to your memories again: recall your visits to Starbucks or McDonald’s. What is the driving force behind your will? How have you gained that internal feeling over past periods? Multiple other samples are available, especially from the leisure and hospitality industry. Pioneers of the new economics are there already, others are joining the league. And yes… people are moving towards those fat guys from the WALL-E movie…

The Invisible Economy

Staging experience is not enough. Starbucks provides multiple coffee blends, Apple provides multiple gadgets and even colors. But it is not enough. I am an example. I need a curved phone (suitable for my butt shape, because I keep it in the back pocket). Furthermore, I need a bendable phone, friendly for sitting when it’s in the pocket. While the majority of manufacturers are ignoring this, LG is planning something. Let’s see what it will be; there is evidence of a curved and flexible one. But I am not alone with my personal [strange?] wishes. Others are dreaming of other things. Big guys may not be nimble enough to keep pace with transforming and accelerating demand. It’s cool to be able to select colors for New Balance 993 or 574, but it’s not enough. My foot is different than yours, I need more exclusivity (towards usability and sustainability) than just colors. Why not use some kind of digitizer to scan my foot and then deliver my personal shoes?

“The holy place is never empty” is my free translation of a Ukrainian proverb. It means that an opportunity overlooked by the current guys is fulfilled by others, newcomers. There is a rising army of craftsmen and artists producing at home (manually or on 3D printers) and selling on Etsy. Fast Company has a great insight on that: “… Micro-entrepreneurs are doing something so nontraditional we don’t even know how to measure it…” There are bigger communities, like Ponoko. It is a new breed of doers, called fabricators. And Ponoko is a new breed of environment, where they meet, design, make, sell, buy and interact. The conclusion here is straightforward – our demand is fulfilled by new guys and in a different way than we are used to. You can preview a 3D model or simulation from thousands of miles away and your thing will be delivered to your door. You can design your own thing. They can design for you and so on. And this economy is growing. Hey, big guys, it’s a threat for you!

The most exciting part of the economic transformation is the foreseen death of banks. Sometimes banks are good, but in the majority of modern cases they are bad. We don’t need Wells Fargo and similar dinosaurs. Amazon, Google, Apple and PayPal could perform the same functions more efficiently and do less evil to people. There are emerging alternatives [to banks] for funding initiatives and exchanging funds between each other. Kickstarter and JumpStartFund are on the rise. Even for very serious projects like Hyperloop. Those things are still small (that’s why the section is called Invisible), but they are gaining momentum and will hit the overall economy quite soon and heavily, in less than five years.

3D Printing

Here we are, taking digital goods and printing them into hard goods. It is still an early stage, but very promising and accelerating. The MakerBot Replicator costs $2,199, which is affordable for personal use. There is a model priced at $2,799, which still qualifies for personal use. What does it mean for consumption? The World is being digitized. We are creating a digital copy of our world; everything is digitized and virtualized. Then the digital can be implemented in the physical (a hard good) on a 3D printer. Solid Concepts has very serious 3D printers, capable of printing a metal gun that survives a 500-round torture test. As soon as the internal structure at the molecular level is recreated and we achieve identical material characteristics, the only question left is cost reduction for the technology. As soon as 3D printing is cheap, we are there, in a new exciting economy.

Let’s review other, more useful applications of the technology than guns. We eat to live, entertain ourselves to live well, and we cure diseases (which sometimes happen because of lifestyle and food). So, food first. 3D printed meat is already a reality. Meat is printed on a bioprinter. Guess who funded the research? Sergey Brin, the googler. Modern Meadow creates leather and meat without slaughtering animals. Next is health. The problem of waiting lists for organ transplants is ending. Your organs will be 3D printed. It is better than a transplant because there are no immune risks anymore. And finally, drugs. Recall pandemic situations with the flu. Why do you have to wait a week for a vaccine? You can 3D print your drugs from the digital model instantly, as soon as you download the digital model over the Internet. Downloaded and printed drugs are an additional argument for the Personalized Medicine in my recent post on the Next Five Years of Healthcare. I assume that applying the technology to the basic aspects of life such as food, lifestyle and healthcare is sufficient to start taking it [technology] seriously. You can guess other less life-critical applications yourself.

4D Printing

3D printing is on the rise, but there is an even more powerful technology, called 4D printing. The fourth dimension is delayed in time and is related to common environment characteristics such as temperature and water, or some more specific ones such as chemicals. When an external impact is applied, the 3D-printed strand folds into a new structure, hence it uses its 4th dimension. It is very similar to protein folding. There are tools for the design of 4D things. One of them is cadnano, for three-dimensional DNA origami nanostructures. It gives certainty about the stability of the designed structures. Another tool is Cyborg by Autodesk. It’s a set of tools for modeling, simulation and multi-objective design optimization. Cyborg allows the creation of specialized design platforms specific to domains, from nanoparticle design to tissue engineering, to self-assembling human-scale manufacturing. Check out the excellent introduction to self-assembly and 4D printing by Skylar Tibbits from MIT.

Forecast [on Consumption]

We will complete the digitization of everything. This should be obvious to you at this stage. If not, then check out a slightly different view on what Kevin Kelly called The One. No bits will live outside of the one distributed self-healing digital environment. Actually it will be us, a digital copy of us. Data-wise it will be All Data together. The second reference will be to James Burke, who predicted the rise of PCs, in-vitro fertilization and cheap air travel back in 1973. Recently Burke admitted: “…The hardest factor to incorporate into my prediction, however, is that the future is no longer what it has always been: more of the same, but faster. This time: faster, yes, but unrecognisably different…” And I see it the same way, we are facing a different future than we are used to. It’s a bit scary but on the other hand it is very exciting. In 30 years we will have nano-fabricators, which manipulate at the level of atoms and molecules, to produce anything you want, from dirt, air, water and cheap carbon-rich acetylene gas. As you may already feel, those ingredients are virtually free, hence production of goods by a fabricator is almost free. Probably food will be a bit more expensive, but also cheap. By the way, each fabber will be able to copy itself… from the same cheap ingredients. We will not need plenty of wood, coal, oil and gas for nanofabrication. This is good for ecology. But I think we will invent other ways to spoil the Earth.

The value will shift from equipment to the digital models of the goods. Advanced 3D (and 4D) models will not be free; the rest will be crowdsourced and available for free. Autodesk, not a new company, but one of the serious ones, is a pioneer there with its 123D apps platform. They are moving together with MakerBot. You can buy a MakerBot Replicator on the Autodesk site and vice versa, you will get Autodesk software together with a MakerBot you bought elsewhere. It’s how it all is starting. In a few years it will take off at large scale. Then we will get a different economy, with much more personal, sustainable and sensational consumption.

It would be interesting to draw parallels with the creation of Artificial Intelligence, because in 2030 we should have a human brain simulated on a non-biological carrier. Or maybe we will be able to 4D or 5D-print brains more powerful than the human one on a biological, but non-human carrier? Stay tuned.

Next Five Years of Healthcare

This insight is related to all of you and your children and relatives. It is about health and healthcare. I feel confident to envision the progress for five years, but cautious to guess for longer. Even the next five years seem pretty exciting and revolutionary. I hope you will enjoy the pathway.

We have problems today

I will not bind this to any country, hence American readers will not find Obamacare, ACO or HIE here. I will go globally as I like to do.

The old industry of healthcare still sucks. It sucks everywhere in the world. The problem is in the uncertainty of our [human] nature. It’s a paradox: medicine is one of the oldest practices and sciences, but nowadays it is one of the least mature. We still don’t know for sure why and how our bodies and souls operate. The reverse engineering should continue until we gain the complete knowledge.

I believe there were civilisations tens of thousands of years ago… but let’s concentrate on ours. It took many years to start studying ourselves in depth. Leonardo da Vinci made a breakthrough in anatomy in the early 1500s. The accuracy of his anatomical sketches is amazing. Why didn’t others draw at the same level of perfection? The first heart transplant was performed only in 1967 in Cape Town by Christiaan Barnard. Today we are still weak at brain surgery, and even at the knowledge of how the brain works and what it is. Paul Allen significantly contributed to the mapping of the brain. The ambitious Human Genome Project was completed only in the early 2000s, with 92% of sampling at 99.99% accuracy. Today, there is no clear vision or understanding of what the majority of DNA is for. I personally do not believe in Junk DNA, and the ENCODE project confirmed it might be related to protein regulation. Hence there is still plenty of work to complete…

But even with the current medical knowledge healthcare could be better. Very often the patient is admitted from scratch as a new one. Almost always the patient is discharged without proper monitoring of medication, nutrition, behaviour and lifestyle. There are no mechanisms, practices or regulations to make it possible. For sure there are some post-discharge recommendations and assignments to aftercare professionals, but they are immature and very inaccurate in comparison to what they could be. There are glimpses of telemedicine, but it is still very immature.

And finally, the healthcare industry in comparison to other industries such as retail, media, leisure and tourism is far behind in terms of consumer orientation. Even automotive industry is more consumer oriented than healthcare today. Economically speaking, there must be transformation to the consumer centric model. It is the same winning pattern across the industries. It [consumerism] should emerge in healthcare too. Enough about the current problems, let’s switch to the positive things – technology available!

There could be Care Anywhere

We need care anywhere. Either it is underground in the diamond mine, or in the ocean on-board of Queen Mary 2, or in the medical center or at home, at secluded places, or in the car, bus, train or plane.

There is a wireless network (from cell providers), there are wearable medical devices, there is a smartphone as a man-in-the-middle to connect with the back-end. It is obvious that diagnostics and prevention, especially for chronic diseases and emergency cases (first aid, paramedics), could be improved.

I personally experienced two emergency landings, once on board a six-hour flight, the second time while driving to another airport to pick up a colleague. The impact is significant. Imagine that 300+ people landed in Canada, then according to Canadian law all luggage was unloaded, moved to X-ray, then loaded again; we all lost a few hours because of somebody’s heart attack.

It could be prevented if the passenger had a heart monitor, a blood pressure monitor and other devices, and they triggered an alarm to take a pill or ask the crew for a pill in time. The best case is that all wearable devices are linked to the smartphone [it is often allowed to turn on Bluetooth or Wi-Fi in airplane mode]. Then the app would ring and display recommendations to the passenger.

4P aka Four P’s

The medicine should go Personal, Predictive, Preventive and Participatory. It will become so in five years.

Personal is already partially explained above. Besides consumerism, which is a social or economic aspect, there should be a truly biological personal aspect. We all differ from one another at millions of positions in our genomes. That biological difference does matter. It defines the carrier status for illnesses, it is related to the risks of illnesses, it is related to individual drug response and it uncovers other health-related traits [such as Lactose Intolerance or Alcohol Addiction].

Personal medicine is equivalent to Mobile Health. Because you are in motion and you are unique. The single sufficiently smart device you carry with you everywhere is a smartphone. Other wearable devices are still not connected [directly into the Internet of Things]. Hence you have to use them all with the smartphone in the middle.

The shift is from volume to value. From pay for procedures to pay for performance. The model becomes outcome based. The challenge is how to measure performance: good treatment vs. poor bedside manner, poor treatment vs. good bedside manner and so on.

Predictive is a pathway to healthcare transformation. As healthcare experts say: “the providers are flying blind”. There is no good integration and interoperability between providers and even within a single provider. The only rational way to “open the eyes” is analytics. Descriptive analytics to get a snapshot of what is going on, predictive analytics to foresee the near future and make the right decisions, and prescriptive analytics to know even better the reasoning behind future things.

Why is there still no good interoperability? Why is there no wide HL7 adoption? How many years have gone by since those initiatives and standards? My personal opinion is that the current [and former] interoperability efforts are a dead end. The rationale is simple: if it were worth doing, it would already be done. There might be something in the middle – the providers will implement interoperability within themselves, but not at the scale of the state or country or globally.

Two reasons for “dead interop”. The first is business related. Why should I share my stuff with others? I spent money on expensive labs or scans, I don’t want others to benefit from my investments into this patient’s treatment. The second is the breakthrough in genomics and proteomics. Only 20 minutes are needed to purify DNA from body fluids with the Zymo Research DNA Kit. A genome in 15 minutes for under $100 has been planned by Pacific Biosciences by this year. Intel invested 100 million dollars into Pacific Biosciences in 2008. Besides gene mechanisms, there are others, not related to DNA change. They are also useful for analysis, prediction and decision making per individual patient. [Read about epigenetics for more details]. There is a third reason – Artificial Intelligence. We already classify with AI, and very soon we will put much more responsibility onto AI.

Preventive is a very interesting transformation, because it blurs the borders between treatment and behaviour/lifestyle/wellness, and between drugs and nutrition. It is directly related to chronic diseases and to post-discharge aftercare, even self aftercare. To prevent readmission, the patient should take proper medication, adjust her behaviour and lifestyle, and consume special nutrition. E.g. diabetes patients should eat special sugar-free meals. The question is where the drug ends and where nutrition starts. What is Diet Coca-Cola? A first step towards drugs?

Pharmacogenomics is on the rise as a way to act proactively, based on the known response of an individual to the drugs. It is both predictive and preventive. It will become normal for mass universal drugs to disappear, while narrowly targeted drugs are designed instead. Personal drugs are the next step, when the patient is the foundation for almost exclusive treatment.

Participatory is interesting in that non-healthcare organisations become related to healthcare. P&G produces sunscreens designed by skin type [at molecular level], for older people and for children. Nestle produces dietary food. And recall there are Johnson & Johnson, Unilever and even Coca Cola. I strongly recommend looking into the PwC Health practice for insights and analysis.

Personal Starts from Wearable

The most important driver for the adoption of wearable medical devices is the ageing population. The average age of the population increases, while the mobility of the population decreases. People need access to healthcare from everywhere, and at lower cost [for those who have retired]. Chronic diseases are related to the ageing population too. Chronic diseases require constant control, with intervention by a physician in case of high or low measurements. Such control is possible via multiple medical devices. Many of them are smartphone-enabled: a corresponding application runs on the phone and “decides” what to tell the user.
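The “decides” step for high or low measurements can be pictured as a simple range check. This is only a minimal sketch: the metric names, the `LIMITS` table and the numeric bounds are hypothetical placeholders for illustration, not clinical guidance and not taken from any real device or app.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """Acceptable interval for one measurement (inclusive bounds)."""
    low: float
    high: float


# Hypothetical, illustrative limits only; real limits come from a physician.
LIMITS = {
    "glucose_mmol_l": Range(4.0, 10.0),
    "heart_rate_bpm": Range(50.0, 120.0),
}


def decide(metric: str, value: float) -> str:
    """Classify a reading as 'low', 'high' or 'ok' against its range."""
    limits = LIMITS[metric]
    if value < limits.low:
        return "low"
    if value > limits.high:
        return "high"
    return "ok"


if __name__ == "__main__":
    for metric, value in [("glucose_mmol_l", 3.2), ("heart_rate_bpm", 72.0)]:
        print(metric, value, decide(metric, value))
```

A real application would also look at trends over time and route “low” or “high” readings to the physician rather than only to the user.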

Glucose meters are much smaller now; here is a slick one from iBGStar. Heart rate monitors are available in plenty of choices. Fitness trackers and dietary apps make up the vast majority of [mobile health] apps in the stores. Wrist bands are becoming an element of lifestyle, especially with the fashionably designed Jawbone Up. The BodyMedia triceps band is good for calorie tracking. Add here wireless weight… I’ve described gadgets and principles in previous posts Wearable Technology and Wearable Technology, Part II. Here I’d like to single out Scanadu Scout, which measures vitals like temperature, heart rate, oximetry [saturation of your hemoglobin], ECG, HRV, PWTT, UA [urine analysis] and mood/stress. Just put the appropriate gadgets onto your body, gather data, analyse it and apply predictive analytics to react or to prevent.

Personal is a Future of Medicine

If you think of all those personal gadgets and brick mobile phones as a sub-niche within medicine, then you are deeply mistaken, because medicine itself will become personal as a whole. It is a five-year transition from what we have to what should be [and will be]. The computer disappears, into the pocket and into the cloud. All pocket-sized and wearable gadgets will miniaturise, while cloud farms of servers will grow and run much smarter AI.

Every one of us will become a “thing” within the Internet of Things. IoT is not a Facebook [it’s too primitive]; it is the quantified and connected you, linked to the intelligent health cloud, and sometimes to physicians and other people [patients like you]. This will happen within the next 5-10 years, I think sooner rather than later. Technology changes within a few years. There were no tablets 3.5 years ago; now we have plenty of them and even new bendable prototypes. Today we experience the first wearable breakthroughs; imagine how far it will advance within the next 3 years. Remember we are accelerating, the technology is accelerating. Much more is to come and it will change our lives. I hope it will transform healthcare dramatically. Many current problems will become obsolete thanks to newly emerging alternatives.

Predictive & Preventive is AI

Both are AI. Period. Providers must employ strong mathematicians, physicists and other scientists to create smarter AI. Google works on duplicating the human brain on a non-biological carrier. Qualcomm designs neuro chips. IBM demonstrated brain-like computing. Their new computing architecture is called TrueNorth.

Other participatory healthcare providers [technology companies, ISVs, food and beverage companies, consumer goods companies, pharma and life sciences] must adopt a strong AI discipline, because all future solutions will deal with extreme data [even All Data], which is impossible to tame with the usual tools. Forget the simple business logic of if/else/loop. Get ready for massive grid computing by AI engines. You might need to recall all the math you were taught and multiply it 100x. [In case of a poor math background, get ready for 1000x the effort]

Education is a Pathway

Both patients and providers must learn genetics, epigenetics, genomics, proteomics and pharmacogenomics. Right now we don’t have enough physicians to translate your voluntarily made DNA analysis [by 23andMe] into personal treatment. There are advanced genetic labs that take your genealogy and markers to calculate the risks of diseases. It should be simpler in the future. And it will go through education.

Five years is the time frame for a new student to become a new physician. Actually slightly more is needed [for residency and fellowship], but we could expect the first observable changes in five years from today. You should start learning it all for your own needs right now, because we all must be educated to bring better healthcare to ourselves!

Information Technology Development

Past.

http://www.softserveinc.com/blogs/information-technology-development-part-1/

Present.

http://www.softserveinc.com/blogs/information-technology-development-part-2/

Future.

http://www.softserveinc.com/blogs/information-technology-development-part-3/

Mobile UX: home screens compared

35K views

Some time in 2010 I published my insight on the mobile home screens for four platforms: iOS, Android, Winphone and Symbian. Today I noticed it got more than 35K views:)

What now?

What has changed since that time? IMHO the Winphone home screen is the best, because it delivers multiple loci of attention, with contextual information within them. But as soon as you go off the home screen, everything else is poor there. iOS and Android have remained lists of dumb icons. No context, no info at all. The maximum possible is a small marker with the number of calls or text messages. And Symbian has died. RIP Symbian.

So what?

Vendors must improve the UX. Take the informativeness of the Winphone home screen, add the aesthetics of iOS graphics, add the openness & flexibility of Android (read Android First) and finally produce a useful hand-sized gadget.

Winphone’s home screen provides multiple loci of attention, as small containers of information. They come mainly in three sizes. Even the smallest box has enough room to deliver much more context information than the number of unread text messages. By rendering an image within the box we can achieve a kind of Flipboard interaction: you decide from the image whether you are interested in it or not. How efficiently the room in the box is used is a second question. My conclusion is that it is used inefficiently. There is still just the number of missed calls or texts, with much room left unused:( I don’t know why the concept of small contexts has been left underutilized, but I hope it will improve in the future. Furthermore, it could improve on Android, for example. The Android ecosystem has great potential for creativity.

Maybe I will visualize this when I get some spare time… Keep in touch here or on Slideshare.

Web 3.0

What is the future of the Web?

Is it the Semantic Web, as somebody called it a long time ago? Spend a few minutes reading the very diverse definitions of Web 3.0 on the wiki and come back here. Nobody argues with all those predictions; all of them will happen at some point in the future. My favorite prediction is something like the one Kevin Kelly made public at the end of 2007, called “Next 5000 Days of the Web”

All those devices and sensors that will suck data into the web are related to our mobile devices. From the Mobile World Congress 2012 I brought back information, announced by Eric Schmidt, that soon we will have 50,000,000,000 connected devices. Just imagine that number: roughly seven devices per person at a world population of about 7 billion. It is really huge!
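The per-person figure is easy to check with a quick calculation. The sketch below assumes a world population of about 7 billion, which is my own round number for 2012 and not part of the announcement; the function name is just illustrative.

```python
# 50 billion connected devices, as quoted above from Mobile World Congress 2012.
CONNECTED_DEVICES = 50_000_000_000

# Assumption: a round figure for the 2012 world population, not from the talk.
WORLD_POPULATION = 7_000_000_000


def devices_per_person(devices: int, people: int) -> float:
    """Average number of connected devices per person."""
    return devices / people


if __name__ == "__main__":
    ratio = devices_per_person(CONNECTED_DEVICES, WORLD_POPULATION)
    print(round(ratio, 1))  # prints 7.1
```

With these inputs the average comes out at about seven devices per person; it only approaches ten if you count a smaller population, for example only the people who are online.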

But what do we have today?

Today we see the boom of mobile apps. It is similar to the boom of apps for PCs 20-25 years ago. History repeats itself, at a slightly different level. Now we have an app boom for devices smaller than the PC. Years ago we had a premium vendor of the app platform, Apple, and a commoditizer, Microsoft. Today we have the same: a premium vendor, Apple, and a new commoditizer, Google/Android. The big picture is similar: the apps are booming, and there is a brand new community of developers and users of them. New business models are emerging to monetize this new boom.

How is it related to the Web at all? The web is in place, it is inevitable and we are all in the web, but there are nuances;) Surfing the web with Mobile Web is not the same as using a Native App. For business applications Mobile Web is the logical choice; it smoothly substitutes for awkward MEAP solutions. It is no surprise that Gartner did not identify any MEAP vendors as Leaders in its Magic Quadrant. There are niche players and visionaries, but there are no leaders. It was not easy, hence many walls were broken by Mobile Web. Enterprises love Mobile Web; it has emerged and is gaining popularity. Is it Web 3.0? What is the difference between a web app for desktop, tablet and phone? There is almost no difference. Just a few additional features, like geolocation available from the browser, the camera and so on. But the delivery model is the same, SaaS-like and familiar from PC times. Hence it is not a revolution that deserves to be named Web 3.0.

Revolution happened.

The revolution seems to be this application boom on modern phones and tablets. It smells like revolution. This is observable in apps like Instagr.am. Believe it or not, Instagram was a threat to Facebook! Initially people published photos on Flickr or Picasa and sent a link to friends and colleagues to share them. With the Facebook photo sharing feature it got simpler: you just upload photos and they get shared automatically within your network. No need for Flickr or Picasa anymore? Then came Instagram, with the opportunity to take pictures with the phone, apply some cool effect and share instantly, without connecting the device to the PC and without that annoying bulk upload. Instagram has a backend, synthesized from Facebook and Twitter, which is cool for the user. You don’t need Facebook anymore to share your pictures! Bingo!

Ok, Instagram is cool, Facebook even bought it to kill it as a competitor… But where is the web there? It is called Web Services. There is a very rich and powerful web, full of clouds and web services. As Jeff Bezos once said, the future of the web was in Amazon Web Services. It is. We have got the very popular S+S model, with a native app on the phone/tablet and a back end on AWS or similar. There is a good report by Vision Mobile, “Apps is a New Web”, dated 2010. We have got new ways of discovering useful things, a brand new UX, new monetization models. Enough arguments to call it the New Web. Maybe not Web 3.0, but it is definitely no longer Web 2.0.

To HTML5 Believers.

Those who count on HTML5 as a standard, and on a return to the good old SaaS approach, could be pleased that for enterprises this works even today and will work tomorrow. But for non-enterprise users this is not the case. First of all, all standards need a few years (up to 5) to mature, and only after that does wide adoption happen. Second, hardware will evolve too. Web technologies will not keep pace with hardware evolution. Have you ever heard about the new sensors planned for the new iPhone? E.g. the infrared camera patent filed by Apple recently. It will serve for DRM, like preventing the recording of a live show. It will serve to identify objects by infrared tags, instead of ugly QR tags. Infrared tags are invisible to people, which means they are better, because they do not spoil the look of the object. OK, back to the infrared sensor: do you think web tools like HTML will have support for the infrared camera tomorrow? I think not. I even bet they will not. The pace of hardware is fast and web technologies will be a few steps behind.

We have entered Web 3.0

New sensors like the infrared camera will be added to phones and tablets in the future. Other devices will emerge too. Recall the 50,000,000,000 connected devices. There is no easy way to apply SaaS to all of them. There is a strong M2M trend, observed during recent years. It is not Web 2.0 anymore. We started from the user apps, and now we are descending to the machine apps too… It is really something brand new. I propose to call this new era Web 3.0. For the semantic web we could choose another name, when it comes. So far we are within something new, and instead of calling it the New Web, let’s call it Web 3.0.